Towards the end of the webinar I mentioned that currently only 27 of the fields from the Revenu Québec form are included in the automation. Even though I built my AI Builder Form Processing model with 34 fields, only 27 fields were displayed in the Document Automation Application Canvas app. This is because a maximum of 27 fields has been defined in the Document Automation Toolkit. I said I'd soon be sharing how to extend the Document Automation Toolkit to include all fields. Well, that time has come, and this is what you'll learn in this #WTF episode 😊

To include the additional 7 fields (34 - 27 = 7), three components need to be updated in the Document Automation Toolkit solution.

The Document Automation Data table needs to be updated so that it can reference the additional 7 fields. There are two sets of fields that need to be created. The first set is for the data values; the second set is for the confidence scores (displayed as a percentage) that the AI Builder Form Processing model returns when it extracts the information based on the mapped fields defined in the model.

The Document Automation Processor cloud flow needs to be updated so that it writes the extracted values and confidence scores to the new fields in the Document Automation Data table.

The Document Automation Application Canvas app needs to be updated so that two screens in the app display the 7 fields.

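The first screen, Fields mapping, is used by end users who configure the Document Automation Toolkit. The second screen, Document Detail, is used by end users who manually review the data extracted by the AI Builder Form Processing model against the uploaded form.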

Steps for extending the Document Automation Toolkit

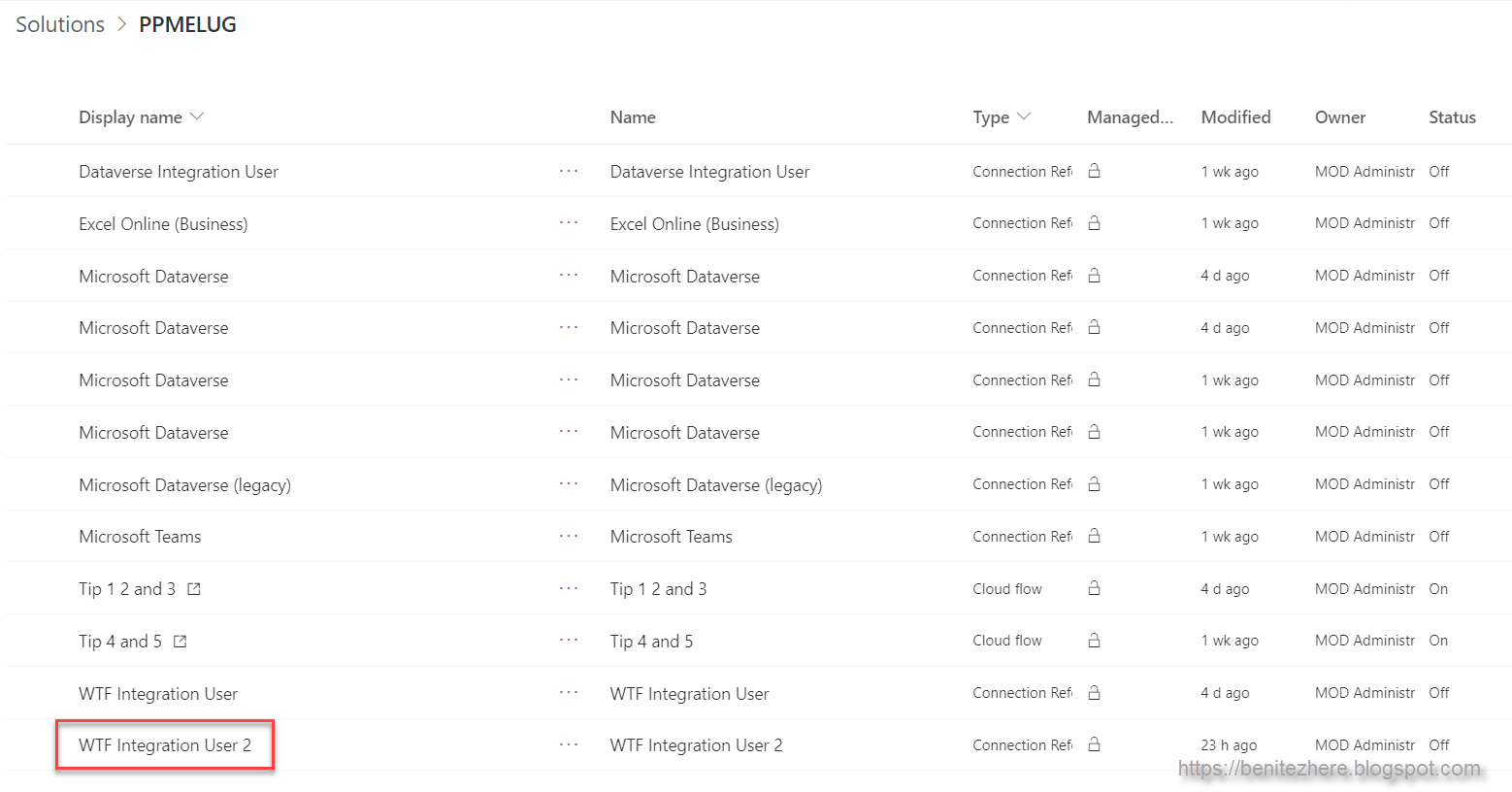

Step 1 - Create a new solution and add the components

A new solution needs to be created so that the components can be configured. This is because the Document Automation Toolkit is a managed solution, and if you make changes directly in this managed solution, it will create an unmanaged layer.

In the new solution add the components from the Document Automation Toolkit solution. In my #WTF episode I added the following components.

Canvas app

Document Automation Application

Dataverse tables

Document Automation Configuration

Document Automation Data

Document Automation Processing

Document Table Data

Document Automation Table Taxonomy

Document Automation Taxonomy

Power Automate cloud flows

Document Automation Email Importer

Document Automation Processor

Document Automation Validator

Now when I think about it, I only needed to add the three components I outlined in the previous section rather than all of the components in the original Document Automation Toolkit solution 😂

Step 2 - Add columns to the Document Automation Data table

The number of columns to create in this table is the difference between the number of fields in your form and the 27 fields the toolkit already supports.

In my scenario I had a total of 34 fields I was automating. The difference between the 27 fields and the total of 34 fields is 7 fields. I needed to create 7 fields for the Data and 7 fields for the Accuracy Percentage.

I created columns Data28 to Data34. These hold the values extracted from the form, as defined by the field mapping in the AI Builder Form Processing model.

I created columns Metadata28 to Metadata34. These hold the confidence scores that the AI Builder Form Processing model assigns to the values extracted from the form.
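If your form has a different number of fields, the column names can be listed with a few lines of Python rather than worked out by hand. This is only a sketch: it assumes the toolkit ships with 27 column pairs as described in this post, and `extra_columns` is a hypothetical helper of mine, not something that exists in the toolkit.

```python
DEFAULT_FIELDS = 27  # column pairs the toolkit already has (per this post)


def extra_columns(total_fields, default_fields=DEFAULT_FIELDS):
    """Return (DataNN, MetadataNN) name pairs for every field beyond the default."""
    return [
        (f"Data{n}", f"Metadata{n}")
        for n in range(default_fields + 1, total_fields + 1)
    ]


# My form has 34 fields, so this lists the 7 pairs to create.
for data_col, metadata_col in extra_columns(34):
    print(data_col, metadata_col)
```

The columns still have to be created by hand in the Document Automation Data table; the script only produces the list of names to work through.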

Step 3 - Update the Document Automation Processor cloud flow

One of the core actions in this cloud flow stores the extracted data in the Document Automation Data table. With the columns added in Step 2, the respective fields in the action also need to be updated. Browse to the Document Automation Processor cloud flow and click Edit. Scroll down to the "Create document processing data" action and again, scroll down until you see the new columns added in Step 2.

In my scenario it's Data28 - Data34. Click on Data27 and copy its expression. Click on Data28 and paste the expression into this field. Update the expression to reference the Data28 column.

variables('DataDictionary')?['Data28']

Repeat for the remaining DataXX columns you created in Step 2.

Next, apply the same steps to the Metadata columns created in Step 2. Click on Metadata27 and copy its expression. Click on Metadata28 and paste the expression into this field. Update the expression to reference the Metadata28 column.

variables('DataDictionary')?['Data28_confidence']

Repeat for the remaining MetadataXX columns you created in Step 2.
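Copying and editing 14 expressions by hand is easy to get wrong, so here is a small Python sketch that prints them all for pasting into the action. It assumes the key pattern shown above for 28 (`DataNN` for the value and `DataNN_confidence` for the score) holds for every new column; `flow_expressions` is a hypothetical helper name.

```python
def flow_expressions(first, last):
    """Map each new column name to the expression to paste into the action."""
    expressions = {}
    for n in range(first, last + 1):
        # Value column reads the DataNN key from the DataDictionary variable.
        expressions[f"Data{n}"] = f"variables('DataDictionary')?['Data{n}']"
        # Metadata column reads the matching confidence key (assumed pattern).
        expressions[f"Metadata{n}"] = (
            f"variables('DataDictionary')?['Data{n}_confidence']"
        )
    return expressions


for column, expression in flow_expressions(28, 34).items():
    print(f"{column}: {expression}")
```

Check one pasted expression against the existing Data27 and Metadata27 fields before pasting the rest, in case your version of the flow uses different keys.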

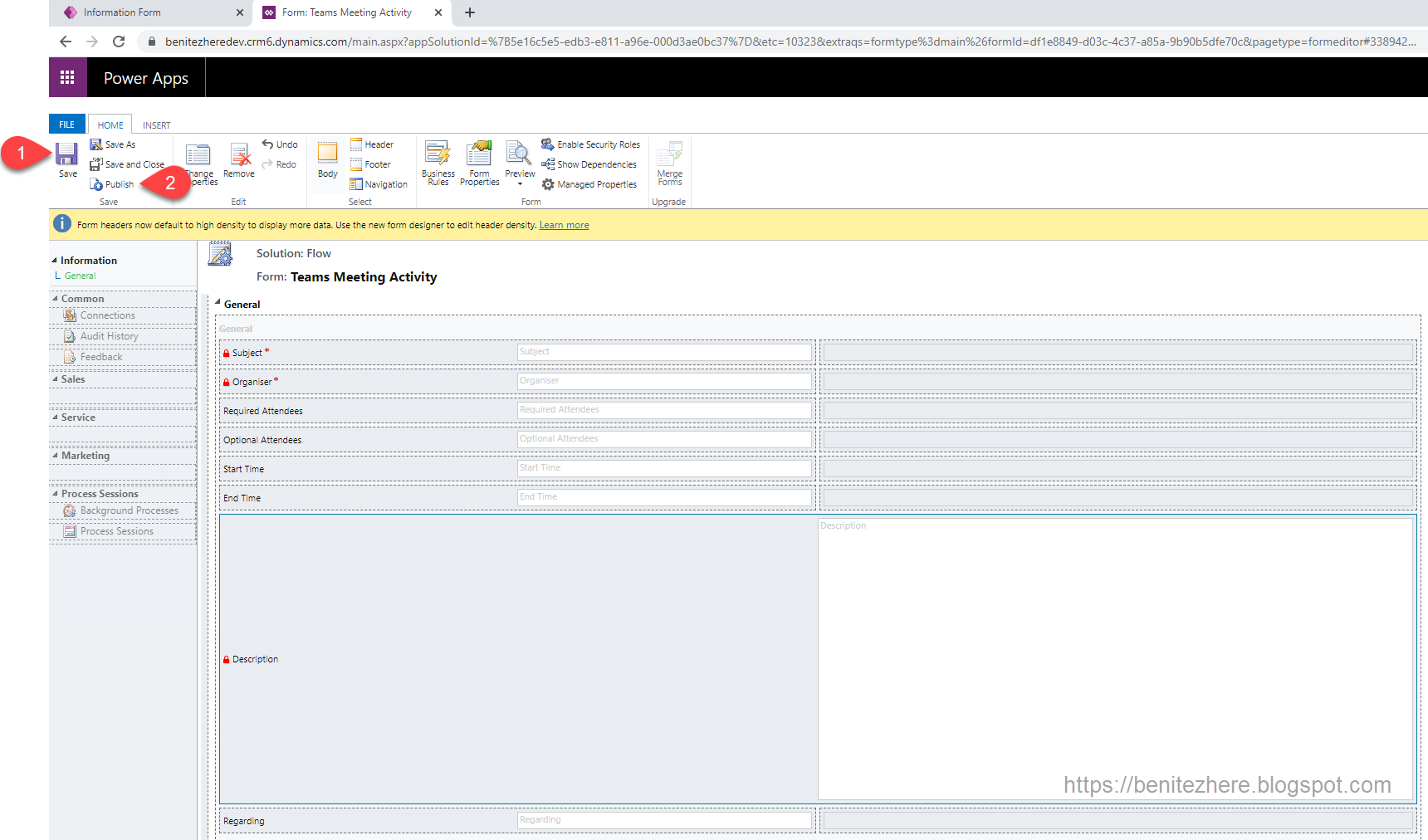

Step 4 - Update the Document Automation Application Canvas app

There are several controls that need to be updated in the Document Automation Application Canvas app.

Fields mapping screen

1. Expand the Fields mapping screen.

2. Select the Hidden Mapping Refresh Button.

3. Select the OnSelect property as the formula needs to be updated.

4. Expand the formula bar and scroll down to the Patch function to update the JSON.

5. Copy an existing row such as Data26.

6. Enter a new line, followed by pasting the content.

7. Update the Name, Index and remaining functions to reference 28.

{

Name: "Data28", Index: 28, 'Document Automation Configuration': CurrentConfiguration,

'Mapped Column': If(NbLabels >= 28, Last(FirstN(ModelKeysCollection, 28)).label)

},

Repeat for the remaining DataXX columns you created in Step 2.

This will display the DataXX columns created in Step 2 in the screen for the end users who will be configuring the Document Automation Toolkit.
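The rows for the remaining columns only differ by the number, so they can be generated instead of edited one at a time. The Python sketch below fills the Data28 row shown above into a template; it assumes the existing rows in your formula look exactly like that one, and `patch_rows` is a hypothetical helper name.

```python
# Template based on the Data28 row shown above; {n} is the column number.
ROW_TEMPLATE = (
    "{{\n"
    "    Name: \"Data{n}\", Index: {n}, "
    "'Document Automation Configuration': CurrentConfiguration,\n"
    "    'Mapped Column': If(NbLabels >= {n}, "
    "Last(FirstN(ModelKeysCollection, {n})).label)\n"
    "}}"
)


def patch_rows(first, last):
    """Return the Power Fx rows for DataNN columns, separated by commas."""
    return ",\n".join(ROW_TEMPLATE.format(n=n) for n in range(first, last + 1))


print(patch_rows(28, 34))
```

The output has commas between rows but none after the last one, so add or remove a comma depending on where you paste it in the formula.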

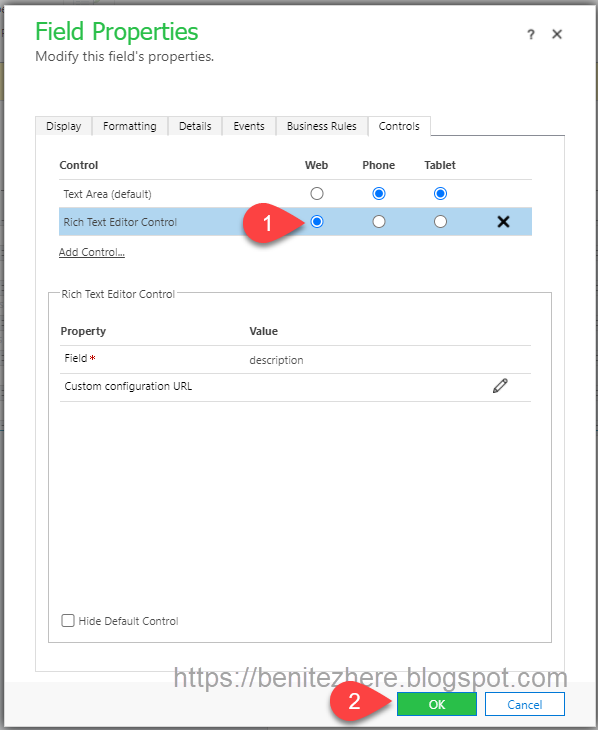

Document Detail Screen

1. Expand the Document Detail Screen.

2. Click the Document Header Form. If you scroll down, you'll see that there are DataCards for the 27 columns. New DataCards need to be added to the Document Header Form control to display the DataXX columns and MetadataXX columns created in Step 2.

3. Click on Edit fields.

4. Click on Add field.

5. Search for Data28.

6. Select Data28.

7. Click Add.

A DataCard control will now be added to the Document Header Form. Scroll down to see it.

8. Unlock the DataCard for Data28

9. Select all the controls within the DataCard and delete them. Yes, delete them - don't worry! Trust me!

10. Select one of the existing DataCards such as Data26_DataCard1.

11. Copy all three controls.

12. Select the Data28_DataCard1 control and paste (hit Ctrl + V on your keyboard). The three controls will now appear.

13. Update the name of the first control to reference 28 - DataCardConfidence28

14. Update the name of the second control to reference 28 - DataCardValue28

15. Update the name of the third control to reference 28 - DataCardKey28

16. Select one of the existing DataCards such as Data26_DataCard1.

17. Select the DisplayName property.

18. Copy the formula.

19. Select Data28_DataCard1 control.

20. Select the DisplayName property.

21. Paste the formula.

22. Update the reference to 28.

23. Select the DataCardConfidence28 control.

24. Select the Text property.

25. Update the formula to reference Metadata28.

26. Rearrange the placement of the controls in the DataCard so they align with the other DataCards. Repeat for the remaining DataXX columns created in Step 2.

27. Save the Canvas app.

28. Publish the Canvas app.
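With seven DataCards to rename it helps to have a checklist of the names each one should end up with. Here is a minimal Python sketch that builds one. It assumes every new DataCard follows the `Data28_DataCard1` pattern above (Power Apps may pick a different suffix in your app), and `datacard_checklist` is a hypothetical helper name.

```python
def datacard_checklist(first, last):
    """List the names each new DataCard and its three controls should have."""
    checklist = []
    for n in range(first, last + 1):
        checklist.append({
            # Assumed DataCard name; confirm the suffix in your own app.
            "datacard": f"Data{n}_DataCard1",
            "controls": [
                f"DataCardConfidence{n}",
                f"DataCardValue{n}",
                f"DataCardKey{n}",
            ],
            # Column the confidence control's Text property should reference.
            "confidence_column": f"Metadata{n}",
        })
    return checklist


for item in datacard_checklist(28, 34):
    print(item["datacard"], item["controls"], item["confidence_column"])
```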

That's it - that's how you extend the Document Automation Toolkit! Awesome sauce 😊

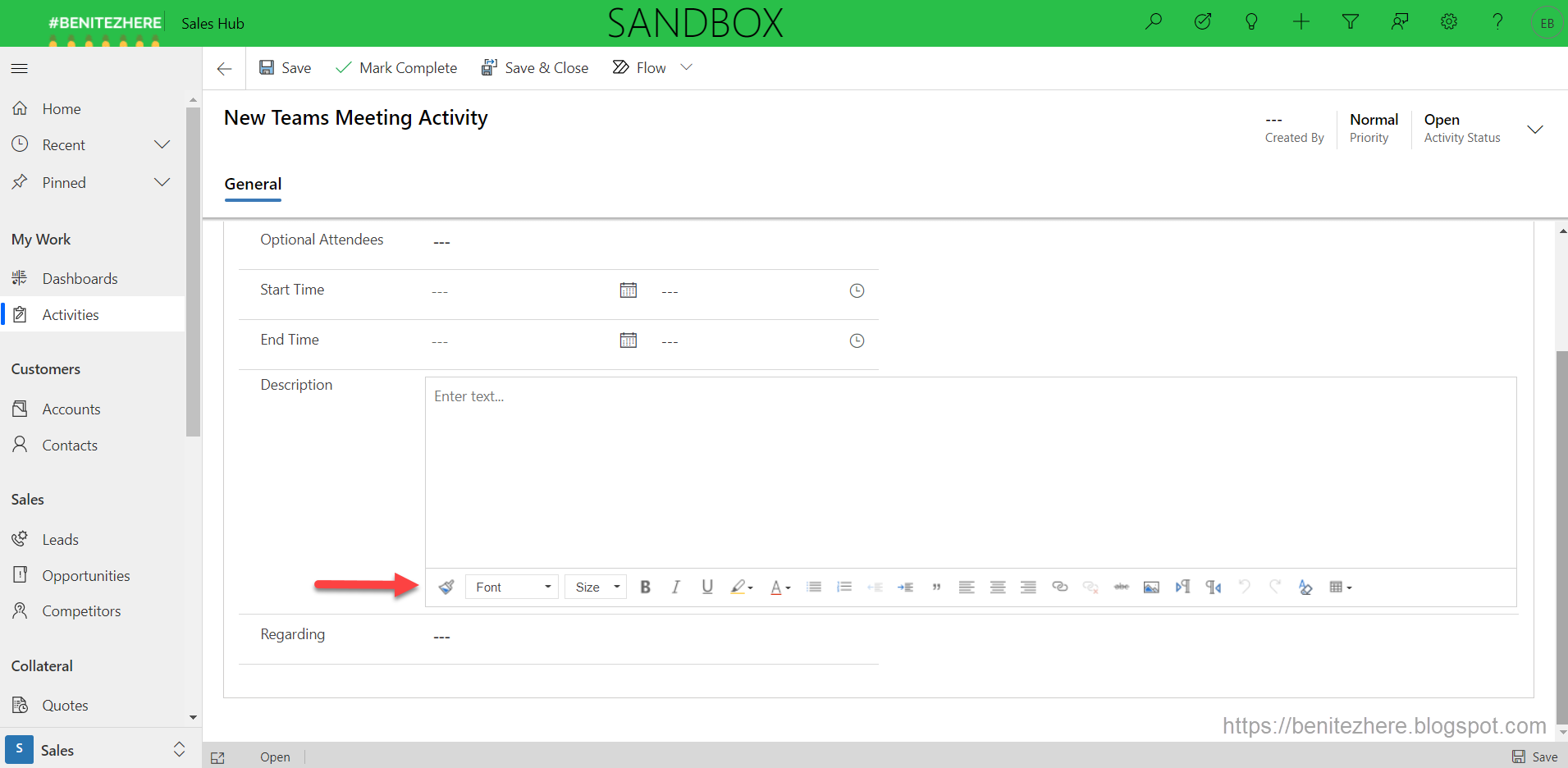

Document Automation Toolkit in action

Trigger the automation by sending an email to the Inbox email address configured in the cloud flow.

As a manual reviewer, load the Document Automation Application Canvas app and you'll now see the additional fields 🙂

Summary

If you need to display all the fields that you've defined and mapped in your AI Builder Form Processing model in the app that comes with the Document Automation Toolkit solution, follow the steps above. Adding the columns beyond the 27 default fields, then updating the cloud flow and the Canvas app, extends the Document Automation Toolkit to suit your requirements.

Until next time #LetsAutomate