What is Microsoft Graph API?

Microsoft Graph API is an access point for all your data across your applications and services in your Microsoft cloud.

Previously, services such as OneDrive for Business, Outlook, Azure Active Directory and Discovery Service each had their own SDK, with its own security, messaging and data format requirements. This was inconsistent and gave developers a steep learning curve. In Microsoft Graph the APIs are centralised, which provides a standardised structure for building applications on top of the different services. It also provides capabilities for extending the experience of an application or service such as Microsoft Teams. This allows developers to build with less effort than before, when they had to learn the different SDKs.

Authentication with Microsoft Graph API

There are two types of permissions:

- Delegated permissions, which require user consent

- Application permissions, which require admin consent

Today, the connectors in flow within Power Automate authenticate through a user account. An example is the Office 365 Users connector: as the flow maker, the connector uses your user account as the credentials and you are required to give consent for the Microsoft Graph API to authenticate as you. Below is a screenshot that shows the authentication method is a user account.

For Microsoft Dataverse, there's the method of authenticating as a Service Principal Account, which is essentially an application user. The Service Principal Account can be used as the authentication method for the CDS current environment connector. But what about the other connectors, such as the Office 365 Users connector I mentioned?

This is where you would need to do some investigation to find what option is suitable for your organisation and what you're trying to achieve with flow in Power Automate. One option is to authenticate as an application which is what I'll be sharing in this WTF episode.

This WTF episode is a side explanation to a webinar I hosted in the week of Thanksgiving 2020, where I demonstrated how the Power Platform can be used to support a hybrid workforce of teams working from the office or remotely. I gave an overview of the following:

- How a Canvas app with a PCF control can be used to allow employees to communicate where they are working from for the week and, if working from the office, to select a floor and desk to sit at

- How to change the look and feel of cards in Microsoft Lists using JSON

- How a manager can interact with a bot through Power Virtual Agents in Microsoft Dataverse for Teams, where a flow does the magic to display the list of employees back to the manager - this is where authenticating as an application with Microsoft Graph API is used

You can watch the webinar recording here.

Part 1 - Create an app registration

To authenticate as an application with the Microsoft Graph API, an app registration needs to be created which can be done in the Microsoft Azure portal. I did cover this in a previous WTF episode but I'll run through it again.

Log into the Azure portal, click on App Registrations and click on +New Registration. If you don't see the following below when you log into the Azure portal, search for App Registration.

Enter a Name, select whether you want single tenant or multiple tenants, and then click the Register button. Refer to the table in this docs.microsoft.com article that explains the differences between the account options.

Once created you'll see details of the App Registration which will be referenced in the connector in flow within Power Automate.

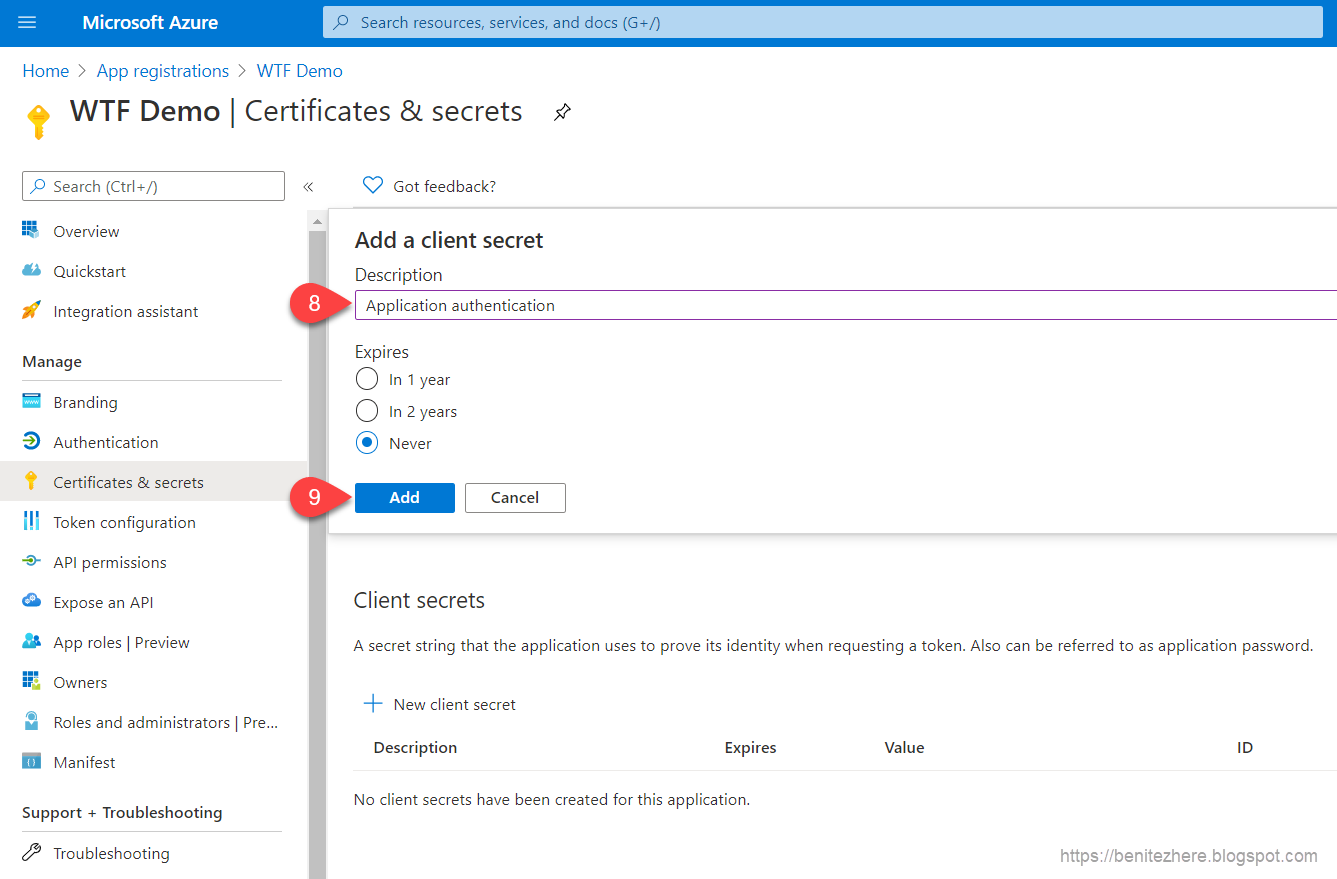

Two more items need to be configured afterwards. The first one I showed in my vlog was to create a Client Secret, which secures the connector in flow within Power Automate. Head to Certificates & secrets and click +New client secret.

Enter a name for the Client Secret. Select a suitable option for the expiry setting and then click Add.

The Client Secret will be created. Copy the Client Secret value and save it somewhere, as this will be used when configuring the authentication of a connector in flow within Power Automate.

The second configuration is to enable API permissions for Microsoft Graph API. The documentation available for Microsoft Graph API is great because each API request has a Permissions section that outlines which permissions are required for delegated access and for application access. The example I used in my vlog was the List directReports API request, and I picked the "User.ReadWrite.All" permission from those listed for the application permission type.

Head over to API permissions and click on +Add a permission and select the Microsoft Graph option.

Select Application permissions.

Search for the User.ReadWrite.All permission, select it and click on Add permissions. A message will appear that confirms the permission has been added, and it will be listed.

Next grant admin consent and that will enable the API permission for the application.
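If you want to double-check that the permission actually made it into the token your application receives, one option is to look at the token's claims. The sketch below is for troubleshooting only and makes some assumptions: it decodes the payload of a JWT without validating the signature, and the sample token is a fake one built on the spot purely for illustration. App-only tokens carry application permissions in a `roles` claim, whereas delegated tokens carry scopes in an `scp` claim.

```python
import base64
import json


def jwt_claims(token):
    """Decode the (unverified) payload segment of a JWT into a dict."""
    payload_b64 = token.split(".")[1]
    # JWT segments drop base64 padding, so restore it before decoding
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))


def has_app_permission(token, permission):
    """True if the token's roles claim lists the application permission."""
    return permission in jwt_claims(token).get("roles", [])


def _segment(obj):
    """Helper for the demo only: encode a dict as an unpadded JWT segment."""
    raw = json.dumps(obj).encode("utf-8")
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


# Fake, unsigned token used only to exercise the functions above
sample_token = ".".join(
    [_segment({"alg": "none"}), _segment({"roles": ["User.ReadWrite.All"]}), ""]
)

print(has_app_permission(sample_token, "User.ReadWrite.All"))
print(has_app_permission(sample_token, "Mail.Read"))
```

If the permission is missing from `roles`, the usual suspect is that admin consent has not been granted yet.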

For further reading on app registration, this docs.microsoft.com article provides some further explanation on what is an App Registration.

Part 2 - Using the app registration in flow within Power Automate to authenticate as an application with Microsoft Graph API

The connector to use in flow within Power Automate is the HTTP connector. This is a premium connector so you would need to ensure that you have the relevant licensing that allows you to use premium connectors.

The following is what would be configured in the HTTP action.

1 - The API request method. This is defined by the API request in the documentation for Microsoft Graph API. In my vlog I was calling the List directReports API request and the method defined in the documentation is GET.

2 - The API request URI as defined in the documentation is what would be inserted in this field of the action. Again, refer to the Microsoft Graph API documentation for the API request URI that you are using. In my use case I am retrieving the list of employees that report to a specific manager, so I am using the second option listed in the documentation.

The parameter id is the Object ID of the user record in Azure Active Directory and the other parameter that can be used is the userPrincipalName which is also in the user record in Azure Active Directory.

You will also notice in my screenshot that I've referenced a $select query parameter, which retrieves only the two properties specified: displayName and userPrincipalName.

3 - This is using the standard definition of application/json as the Content-Type value.

4 - Authentication is Active Directory OAuth to reference the app registration in Microsoft Azure portal.

5 - This is the Tenant ID from the app registration in Microsoft Azure portal.

6 - This is the Base Resource URI of Microsoft Graph API.

7 - This is the Client ID from the app registration in Microsoft Azure portal.

8 - The option to use is Secret.

9 - This is the Client Secret from the app registration.
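For anyone curious what the nine settings above amount to outside of Power Automate, here is a rough sketch in Python. It is not how the HTTP action is implemented; it only builds (and does not send) the two requests I would expect an equivalent OAuth 2.0 client credentials call to need. The v1.0 Graph endpoint, the v2.0 token endpoint and every ID, secret and user name below are placeholder assumptions.

```python
from urllib.parse import quote, urlencode
from urllib.request import Request

# Assumption: base resource URI of Microsoft Graph (item 6)
GRAPH_RESOURCE = "https://graph.microsoft.com"


def direct_reports_url(user_key, select=()):
    """Item 2: List directReports URI, keyed by Object ID or userPrincipalName."""
    url = f"{GRAPH_RESOURCE}/v1.0/users/{quote(user_key, safe='@')}/directReports"
    if select:
        # Only return the named properties
        url += "?$select=" + ",".join(select)
    return url


def build_token_request(tenant_id, client_id, client_secret):
    """Items 4, 5, 7, 8 and 9: client credentials request for an app-only token."""
    token_url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        # .default asks for the application permissions already consented to
        "scope": f"{GRAPH_RESOURCE}/.default",
    }
    return Request(
        token_url,
        data=urlencode(form).encode("ascii"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def build_graph_request(url, access_token):
    """Items 1 and 3: GET request that carries the app-only token."""
    headers = {
        "Authorization": f"Bearer {access_token}",
        "Content-Type": "application/json",
    }
    return Request(url, headers=headers, method="GET")


# Placeholder values, for illustration only
token_request = build_token_request(
    "placeholder-tenant-id", "placeholder-client-id", "placeholder-secret"
)
graph_request = build_graph_request(
    direct_reports_url("manager@contoso.com", ["displayName", "userPrincipalName"]),
    "placeholder-access-token",
)

print(token_request.get_method(), token_request.full_url)
print(graph_request.get_method(), graph_request.full_url)
```

To actually send either request you would pass it to `urllib.request.urlopen`, using the `access_token` value from the first response in the second request. In the flow, the HTTP action takes care of that token exchange for you.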

Flow in action

Once the flow has been configured you're good to go in testing your HTTP action authenticating as an application with the Microsoft Graph API.

What I did demonstrate in my vlog was how the response returned as an application will be the same as the response returned through user authentication.
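To give an idea of what you do with that response, here is a small sketch that pulls the two selected properties out of the body. The sample body is made up for illustration (the names and addresses are hypothetical) and is trimmed down to the `value` array; a real response also includes `@odata` metadata fields.

```python
import json

# Hypothetical response body, trimmed to the part the flow cares about
sample_body = """
{
  "value": [
    {"displayName": "Adele Vance", "userPrincipalName": "adelev@contoso.com"},
    {"displayName": "Alex Wilber", "userPrincipalName": "alexw@contoso.com"}
  ]
}
"""


def list_direct_reports(body):
    """Return (displayName, userPrincipalName) pairs from a directReports body."""
    payload = json.loads(body)
    return [
        (item.get("displayName"), item.get("userPrincipalName"))
        for item in payload.get("value", [])
    ]


for name, upn in list_direct_reports(sample_body):
    print(f"{name} <{upn}>")
```

In the flow itself, the equivalent step would be parsing the HTTP action's body and looping over the `value` array.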

Summary

To authenticate as an application in flow within Power Automate, you can use the HTTP connector and reference details of an Azure app registration. The connectors available (such as Office 365 Users) would not be used since they authenticate as a user, whereas with the HTTP connector you can define the authentication for an application to interact with the Microsoft Graph API.

If you can't grant admin rights in the Microsoft Azure portal, it could be that it's not accessible or not allowed for some users in your organisation. Check out this docs.microsoft.com documentation. Big shout out to Audrie Gordon for the tip! 💡 Subscribe to her YouTube channel, she has great content.

Catch you in the next #WTF episode